🔑 Key Takeaways

- Real-Time AI Interpretation: Google's "Live Search" moves smart cameras from passive recording to active, conversational understanding of live scenes.

- Subscription-Driven Evolution: The feature is gated behind the $20/month Google Home Premium Advanced plan, signaling a shift towards premium, service-based smart home revenue.

- Contextual Intelligence Leap: Broader Gemini updates show significant progress in spatial and contextual awareness for device control, reducing user friction.

- Privacy & Compute Paradigm: Live analysis raises critical questions about data processing locations, consent models, and the balance between convenience and surveillance.

- Strategic Market Positioning: This update is a direct challenge to Amazon's Alexa and Apple's HomeKit, aiming to establish Google as the leader in AI-powered ambient computing.

The Dawn of Conversational Vision in the Home

The smart home landscape has just witnessed a pivotal evolution, one that transcends simple voice commands and scheduled routines. Google's latest infusion of its Gemini artificial intelligence into the Google Home platform, headlined by the "Live Search" capability, represents a fundamental shift from reactive automation to proactive, perceptive assistance. For years, home cameras have served as digital sentinels, capturing footage for later review. Today, Google is teaching them to see, comprehend, and report in real time. This isn't merely an update; it's the embryonic stage of a truly ambient, context-aware living environment.

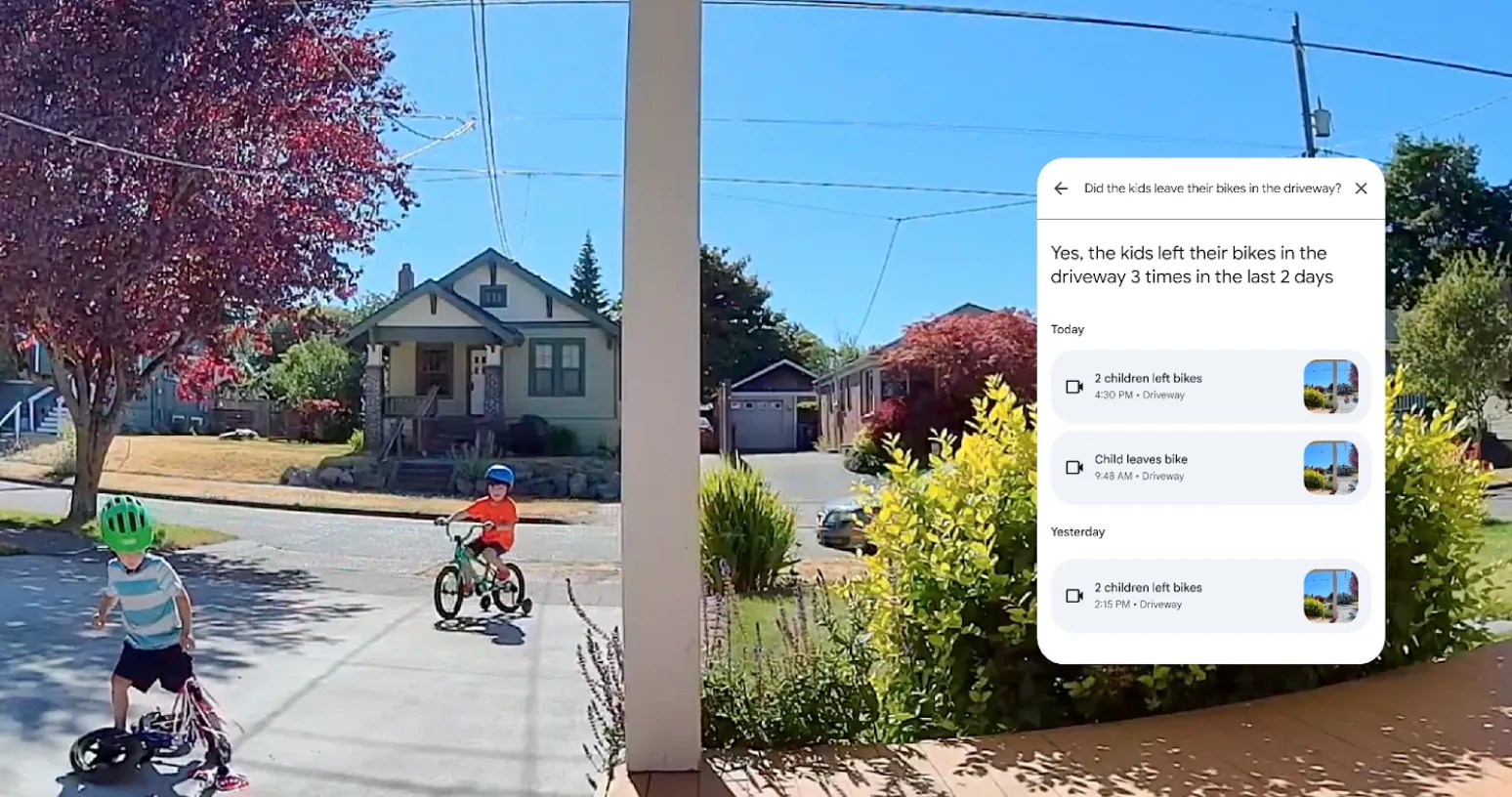

The core proposition is elegantly simple yet technically profound: users can now pose questions about the immediate visual state of their home. Queries like "Is the back door open?" or "Are the kids playing in the yard?" are processed not against a database of past events, but against a live video stream. This requires a sophisticated orchestration of on-device sensors, edge computing, and cloud-based large multimodal models (LMMs) working in concert with minimal latency. It marks a departure from the "record now, ask later" model that has dominated home security, venturing into the realm of "ask now, understand now."

Deconstructing the "Live Search" Technology Stack

To appreciate the significance of Live Search, one must look under the hood. This feature is the culmination of advancements across several domains of computer science. First, it relies on highly efficient, low-latency machine vision models that can run persistently on camera hardware or a local hub, identifying objects, people, animals, and activities. This initial parsing creates a structured data stream—a semantic understanding of the scene—which is then sent to the more powerful Gemini models in the cloud for natural language query resolution.
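The two-stage flow described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not Google's implementation: `Detection`, `summarize_scene`, and `answer_query` are hypothetical names, the detections are hard-coded rather than produced by a vision model, and the cloud model is replaced by a simple keyword matcher so that the example runs on its own.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Detection:
    """One object reported by a hypothetical on-device vision model."""
    label: str         # e.g. "package", "person"
    zone: str          # e.g. "porch", "yard"
    confidence: float  # model score between 0 and 1


def summarize_scene(detections, min_confidence=0.5):
    """Stage 1 (edge): reduce raw detections to a compact semantic summary."""
    return [
        {"label": d.label, "zone": d.zone}
        for d in detections
        if d.confidence >= min_confidence
    ]


def answer_query(query, scene):
    """Stage 2 (stand-in for the cloud model): match query words to the scene.

    A real multimodal model would reason over language and imagery together;
    this keyword match only illustrates the direction of the data flow.
    """
    words = set(query.lower().replace("?", "").split())
    for item in scene:
        if item["label"] in words and item["zone"] in words:
            return f"Yes, there is a {item['label']} on the {item['zone']}."
    return "I don't see that right now."


frame = [
    Detection("package", "porch", 0.91),
    Detection("cat", "yard", 0.32),  # below the threshold, dropped at the edge
]
scene = summarize_scene(frame)
print(answer_query("Is there a package on the porch?", scene))
```

The design point is that only the filtered summary crosses from the first stage to the second, which is what keeps the per-query payload small in the architecture the paragraph describes.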

The magic lies in the alignment between the visual data and the user's intent. When you ask, "Is there a package on the porch?", Gemini isn't just scanning pixels; it's understanding the concepts of "package" and "porch" and the spatial relationship "on." This multimodal reasoning, where AI synthesizes information from different data types (image + language), has been a holy grail in AI research. Its deployment in a consumer product, albeit behind a paywall, signals a new level of maturity. Furthermore, the accompanying updates to Gemini for Home, which improve general knowledge and contextual targeting of devices (e.g., "turn off the kitchen" affecting only lights), indicate a holistic upgrade to the platform's reasoning engine, making the entire smart home ecosystem more intuitive and less literal.
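To make the "turn off the kitchen" example concrete, here is a minimal sketch of context-aware targeting. It assumes a hypothetical rule, not anything Google has documented: a bare room-level "turn off" applies only to device kinds listed in `SAFE_TO_SWITCH_OFF`. The `Device` class and `turn_off_room` function are likewise invented for illustration.

```python
from dataclasses import dataclass


@dataclass
class Device:
    name: str
    room: str
    kind: str          # "light", "fridge", "oven", ...
    is_on: bool = True


# Hypothetical policy: device kinds a bare room-level "turn off" may touch.
SAFE_TO_SWITCH_OFF = {"light"}


def turn_off_room(devices, room):
    """Switch off only the safe device kinds in `room`; return their names."""
    switched = []
    for device in devices:
        if (
            device.room == room
            and device.kind in SAFE_TO_SWITCH_OFF
            and device.is_on
        ):
            device.is_on = False
            switched.append(device.name)
    return switched


home = [
    Device("ceiling light", "kitchen", "light"),
    Device("fridge", "kitchen", "fridge"),
    Device("lamp", "living room", "light"),
]
print(turn_off_room(home, "kitchen"))  # ['ceiling light']
```

A literal interpreter would have powered down the fridge as well; filtering by device kind is one simple way to capture the "less literal" behavior described above.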

The Premiumization of Privacy and Convenience

Google's decision to place Live Search exclusively within the $20/month or $200/year Advanced tier of Google Home Premium is a strategic masterstroke with multiple layers. It transforms the smart home from a capital expenditure (buying hardware) into a recurring software and service revenue stream, a model that has proven immensely lucrative in enterprise software and is now colonizing consumer tech. This move also creates a tangible value ladder, encouraging users of free services to upgrade for advanced capabilities.
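The two quoted prices also imply a discount for committing to a year. The short calculation below uses only the $20/month and $200/year figures cited above; the variable names are illustrative.

```python
MONTHLY_PRICE = 20  # USD per month, as quoted above
ANNUAL_PRICE = 200  # USD per year, as quoted above

cost_if_paid_monthly = 12 * MONTHLY_PRICE              # 240
annual_savings = cost_if_paid_monthly - ANNUAL_PRICE   # 40
savings_pct = annual_savings / cost_if_paid_monthly * 100

print(f"Paying monthly for a year: ${cost_if_paid_monthly}")
print(f"Saved by paying annually: ${annual_savings} ({savings_pct:.1f}%)")
```

In other words, the annual plan costs the same as ten months at the monthly rate, which is a common way to nudge subscribers toward a longer commitment.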

However, this monetization strategy is inextricably linked to the elephant in the room: privacy. Continuous live analysis by an AI is a qualitatively different data proposition from motion-triggered clips stored in the cloud. To the extent that queries are resolved by cloud-hosted models, it implies a steady flow of visual data, or of scene descriptions derived from it, to Google's servers. The premium subscription likely helps offset the substantial computational cost of running Gemini against potentially millions of simultaneous video feeds, but it also risks framing privacy as a premium feature. The implicit bargain is that paying customers are funding a more robust, secure, and perhaps ethically governed data handling process. This establishes a new paradigm where advanced AI convenience is not free, forcing consumers to consciously weigh its value against both monetary cost and perceived data risk.

Broader Implications: The Race for Ambient AI Dominance

This update cannot be viewed in isolation. It is a calculated move in the high-stakes battle for dominance in ambient computing—the idea that technology recedes into the background, anticipating needs without explicit commands. Amazon Alexa, with its ambient intelligence features and Ring camera ecosystem, has long focused on proactive alerts (e.g., "A person was detected"). Google's Live Search counters by adding a conversational, interrogative layer, making the intelligence interactive rather than just broadcast.

Apple's approach, centered on HomeKit and a staunch commitment to on-device processing and privacy, presents a contrasting philosophy. Google's cloud-centric model enables more powerful, constantly improving AI but requires trust in the cloud. Apple's model offers greater privacy assurances but may lag in raw AI capability and feature speed. Google's latest gambit pressures both competitors: Amazon to match its conversational depth, and Apple to justify its premium hardware with equally intelligent, yet private, alternatives. The ultimate winner will be the platform that best balances three pillars: powerful intelligence, seamless usability, and trustworthy data stewardship.

Future Trajectories and Unanswered Questions

Looking forward, Live Search is merely the first step on a longer path. The logical progression includes predictive actions. If Gemini can see a delivery driver approaching, could it not only tell you but also unlock a smart parcel box? If it sees an elderly relative hasn't moved from their chair at a usual time, could it send a gentle check-in? The line between assistance and intervention becomes blurry, raising ethical questions about automation and consent.

Furthermore, the accuracy and bias of these visual recognition systems will be under immense scrutiny. Misidentifying a pet as an intruder or failing to recognize a family member could erode trust instantly. The "updated models" Google mentions must be relentlessly trained on diverse, real-world home environments to avoid such pitfalls. Finally, the integration of this live visual understanding with other data streams—audio, calendar, location—will pave the way for a truly holistic AI home manager, one that doesn't just answer questions about what it sees, but anticipates needs based on a complete sensory picture of the home and its inhabitants.

In conclusion, Google's update is more than a list of new features. It is a declaration of intent. By giving Gemini eyes that can see and describe the present moment, Google is not just upgrading a product; it is actively constructing the foundational layer for the next era of human-computer interaction—one where our living spaces become perceptive, responsive partners in daily life. The journey towards that future is now live, and the questions it prompts are as important as the answers it provides.

About This Analysis

This in-depth analysis was crafted to provide context, technical insight, and forward-looking perspective beyond the basic announcement of Google Home's new Live Search feature. It examines the strategic, technological, and societal implications of embedding advanced multimodal AI into the fabric of everyday life. The original news report served as a catalyst for this broader exploration of the future of ambient computing.